Here’s the gap that should worry engineering leaders more than any single AI incident.

AI made code dramatically cheaper to produce. Boilerplate, scaffolding, internal tools, glue code, first-pass implementations. All faster. I’ve written about this before and I believe the speed is real.

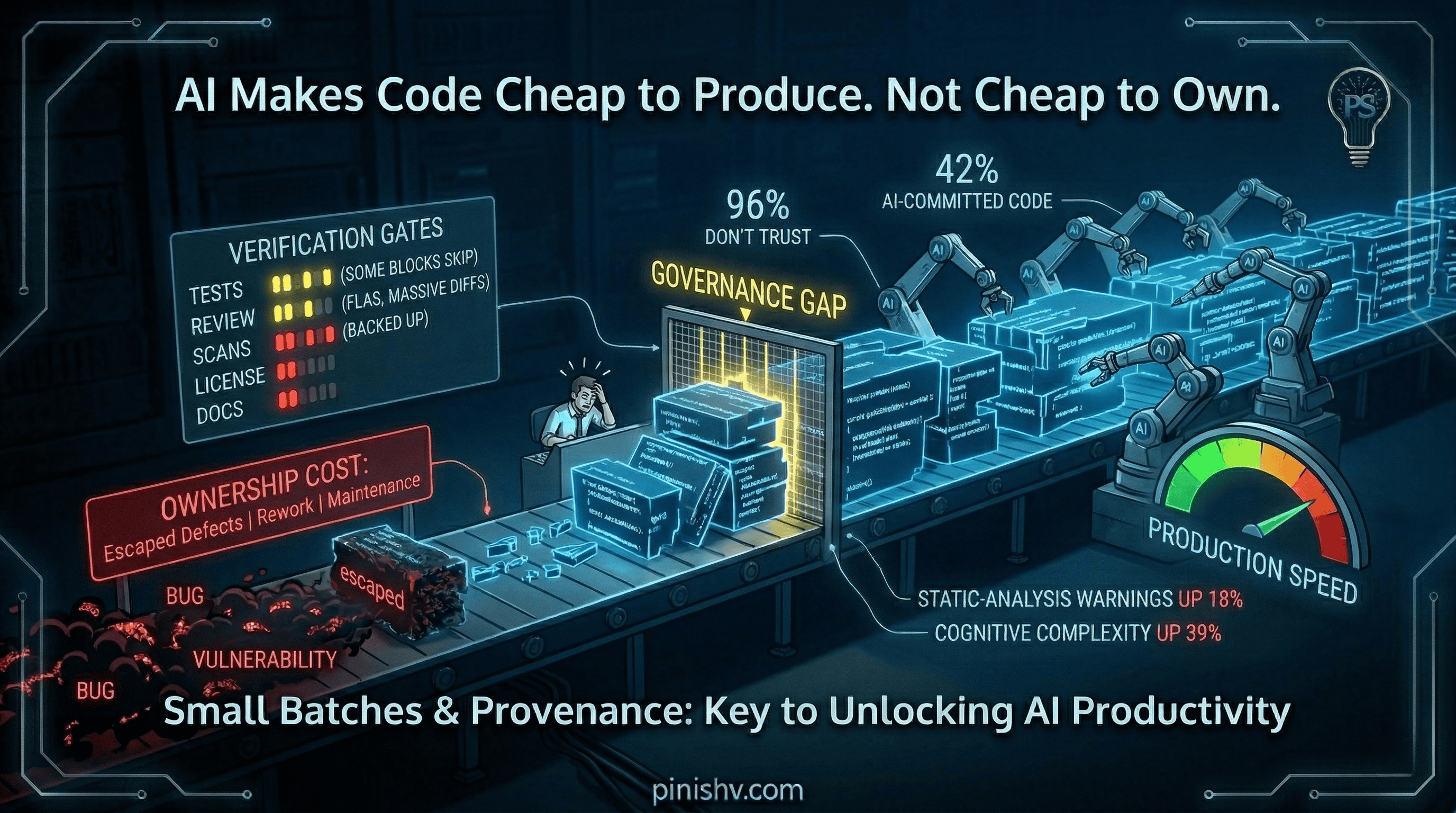

But the cost of owning code didn’t drop at the same rate. Some parts of ownership got faster too: CI pipelines, SAST, dependency scanning, automated testing. The tooling exists. But having the tools and actually prioritizing them are different things. Most teams automate the easy checks and skip the hard ones. And when code volume doubles, even the automated parts need more attention than they’re getting.

The gap between production speed and ownership capacity is where organizations get hurt.

What the data says

Sonar’s developer survey puts numbers on it: 72% of developers who have tried AI use it daily. AI accounts for 42% of committed code. But 96% don’t fully trust the output, and only 48% say they always verify AI-assisted code before committing.

Roughly half of developers don’t always verify AI-assisted code before they commit it. That’s not a tooling problem. That’s a discipline gap.

On the security side, Veracode found risky security flaws in 45% of tests across more than 100 models. Georgetown CSET found that almost half of AI-generated snippets contained bugs that were often impactful. GitGuardian’s 2026 report detected 28.6 million new secrets in public GitHub commits in 2025, a 34% increase year over year, with AI-assisted commits leaking secrets at roughly twice the baseline.

On code quality, GitClear’s analysis found more cloned code, less refactoring, and more short-term churn. A January 2026 study on autonomous coding agents found static-analysis warnings rising 18% and cognitive complexity up 39%.

None of this says AI is useless. All of it says code production is accelerating faster than code governance.

Where it breaks

The pattern I keep seeing looks the same across organizations.

AI generates code quickly. The PR looks good. The tests pass (if there are tests). The review is fast because the diff is large and the reviewer is busy. It ships. It works. For now.

Three months later, someone needs to modify that code and can’t understand it, because nobody on the team wrote it and it isn’t structured the way they’d naturally reason about the problem. Or a dependency it pulled in has a vulnerability. Or a license obligation nobody noticed is now a legal question. Or the secrets it embedded are in a log somewhere.

The cost doesn’t show up at generation time. It shows up at ownership time. And by then, the team that generated it has moved on to the next sprint.

DORA’s 2025 AI report found a negative relationship between higher AI adoption and delivery stability. Their recommendation is one of the oldest engineering lessons: small batch sizes. AI can generate massive blocks of code that are hard to review and test. Small batches plus strong automated testing are the counterweight.

What to change

Same gates for all code. AI-generated code goes through tests, review, linting, SAST, dependency scanning, secret scanning, and license checks. No exceptions. The standard is “would we be comfortable owning this in production?”
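
Here is a minimal sketch of what "no exceptions" can look like as a merge check. The gate names and the `passed_gates` input are hypothetical; map them to whatever your CI actually reports. The point is only that the required set doesn't change based on how the code was produced.

```python
# Hypothetical gate names; map them to whatever your CI actually reports.
REQUIRED_GATES = frozenset({
    "tests", "review", "lint", "sast",
    "dependency_scan", "secret_scan", "license_check",
})


def missing_gates(passed_gates, ai_assisted=False):
    """Return the required gates that have not passed, sorted by name.

    `ai_assisted` is accepted but deliberately ignored: the required
    set is the same no matter who or what wrote the code.
    """
    return sorted(REQUIRED_GATES - set(passed_gates))


def can_merge(passed_gates, ai_assisted=False):
    """A change is mergeable only when every required gate has passed."""
    return not missing_gates(passed_gates, ai_assisted)
```

Calling `missing_gates({"tests", "review"}, ai_assisted=True)` lists the five gates still outstanding, exactly as it would for hand-written code.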

Small batches, always. Resist the temptation to let AI generate a 500-line PR. Break it up. Review it in pieces. The speed gain from generation is worthless if it creates a review and maintenance bottleneck downstream.
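
One way to make the batch-size rule enforceable is a check on the diff itself. This is a rough sketch, assuming git-style unified diffs where each file section starts with a `diff --git` line. The 200-line budget is a made-up number, not a recommendation from any of the reports above.

```python
MAX_CHANGED_LINES = 200  # hypothetical budget; tune per team


def changed_lines(diff_text):
    """Count added and removed lines inside the hunks of a git-style diff."""
    count = 0
    in_hunk = False
    for line in diff_text.splitlines():
        if line.startswith("diff --git"):
            in_hunk = False  # new file section; header lines follow
        elif line.startswith("@@"):
            in_hunk = True   # hunk header; content lines follow
        elif in_hunk and line.startswith(("+", "-")):
            count += 1
    return count


def within_budget(diff_text, limit=MAX_CHANGED_LINES):
    """True when the diff stays at or under the changed-line budget."""
    return changed_lines(diff_text) <= limit
```

Only lines inside hunks are counted, so the `---` and `+++` file headers don't inflate the total. A check like this doesn't make a PR reviewable on its own, but it flags the ones that clearly aren't.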

Track provenance. If you can’t answer what third-party components entered through AI, what licenses apply, and who owns the output, you don’t understand what you shipped.
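
A provenance record doesn't need to be elaborate. The sketch below is one hypothetical shape for it; the field names and the `open_questions` helper are my own illustration, not a standard format. It can only flag gaps in what was recorded, so a component that never made it into the record stays invisible.

```python
from dataclasses import dataclass, field


@dataclass
class ProvenanceRecord:
    change_id: str
    ai_assisted: bool
    owner: str = ""  # team or person accountable for the output
    # Third-party component name -> license identifier ("" if unknown).
    components: dict = field(default_factory=dict)


def open_questions(record):
    """List the provenance questions this record still cannot answer."""
    questions = []
    if not record.owner:
        questions.append("who owns the output")
    unlicensed = sorted(name for name, lic in record.components.items() if not lic)
    if unlicensed:
        questions.append("what license applies to: " + ", ".join(unlicensed))
    return questions
```

An empty list from `open_questions` means the record answers the questions it was designed to track, nothing more.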

Measure ownership, not output. Escaped defects. Rework rate. Time-to-understand for someone new. Rollback frequency. These tell you whether code is owned, not just produced.
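
To make a few of these concrete, here is a sketch that computes them from per-change records. The `Change` fields and the exact definitions (rework as lines rewritten within 30 days divided by lines added, for example) are assumptions for illustration; teams define these differently. Time-to-understand is left out because it needs survey or onboarding data rather than commit data.

```python
from dataclasses import dataclass


@dataclass
class Change:
    lines_added: int
    lines_reworked_within_30d: int  # assumed definition of "rework"
    rolled_back: bool
    escaped_defects: int            # defects found after release


def ownership_metrics(changes):
    """Summarize rework, rollbacks, and escaped defects across changes."""
    n = len(changes)
    total_added = sum(c.lines_added for c in changes)
    reworked = sum(c.lines_reworked_within_30d for c in changes)
    rollbacks = sum(1 for c in changes if c.rolled_back)
    defects = sum(c.escaped_defects for c in changes)
    return {
        "rework_rate": reworked / total_added if total_added else 0.0,
        "rollback_frequency": rollbacks / n if n else 0.0,
        "escaped_defects_per_change": defects / n if n else 0.0,
    }
```

Tracked over time, and split by AI-assisted versus not if you record that, these numbers show whether the ownership side is keeping up with the production side.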

Budget for the ownership layer. If your team is spending 80% of its capacity generating code and 20% on everything else, flip that conversation. The generation is the cheap part now. The ownership is where the investment needs to go.

The one-line version

AI made the first draft cheap. It didn’t make the second year cheap. Plan accordingly.

How is your team handling the gap between code production speed and governance capacity? I’d love to hear what’s working. Find me on X or Telegram.