Something shifted in the last year and I don’t think enough people are talking about it honestly.

“Ship fast with AI” became the default expectation. Not just in one company. Everywhere. I hear it in conversations with other engineering leaders, I see it in open source repos, I notice it in how people talk about engineering work online. The assumption is that if you’re not shipping faster with AI, you’re falling behind. And if you push back, if you slow down to ask whether anyone actually understands what shipped, you look like you’re blocking progress.

Engineering discipline became a career risk. That’s the shift.

Not because AI is bad. I’m pro-AI. I want teams using it aggressively. But the culture around it drifted somewhere dangerous: we started treating speed as proof of quality, and nobody corrected the mistake because the dashboards looked great.

How the rot works

Here’s the chain reaction I keep seeing play out.

It starts with the culture. Leadership sets the tone: adopt AI, move faster, ship more. That’s reasonable. AI does make the mechanical parts of software cheaper. Boilerplate, scaffolding, migrations, glue code, first-pass implementations. All dramatically cheaper now. One strong engineer with the right tools can burn through work that used to take days. That part is real, and teams that ignore it are choosing to be slower for no reason.

But then the culture starts rewarding output over understanding. The engineer who ships three features in a sprint looks more productive than the one who ships one but thinks deeply about failure modes, tests edge cases, and refactors the interface. The first engineer gets praised. The second one gets asked why they’re slower than their peers.

That’s where discipline starts to erode. Not because engineers are lazy. Because the system is telling them that slowing down to think is unproductive. The incentive points at speed, so speed is what you get.

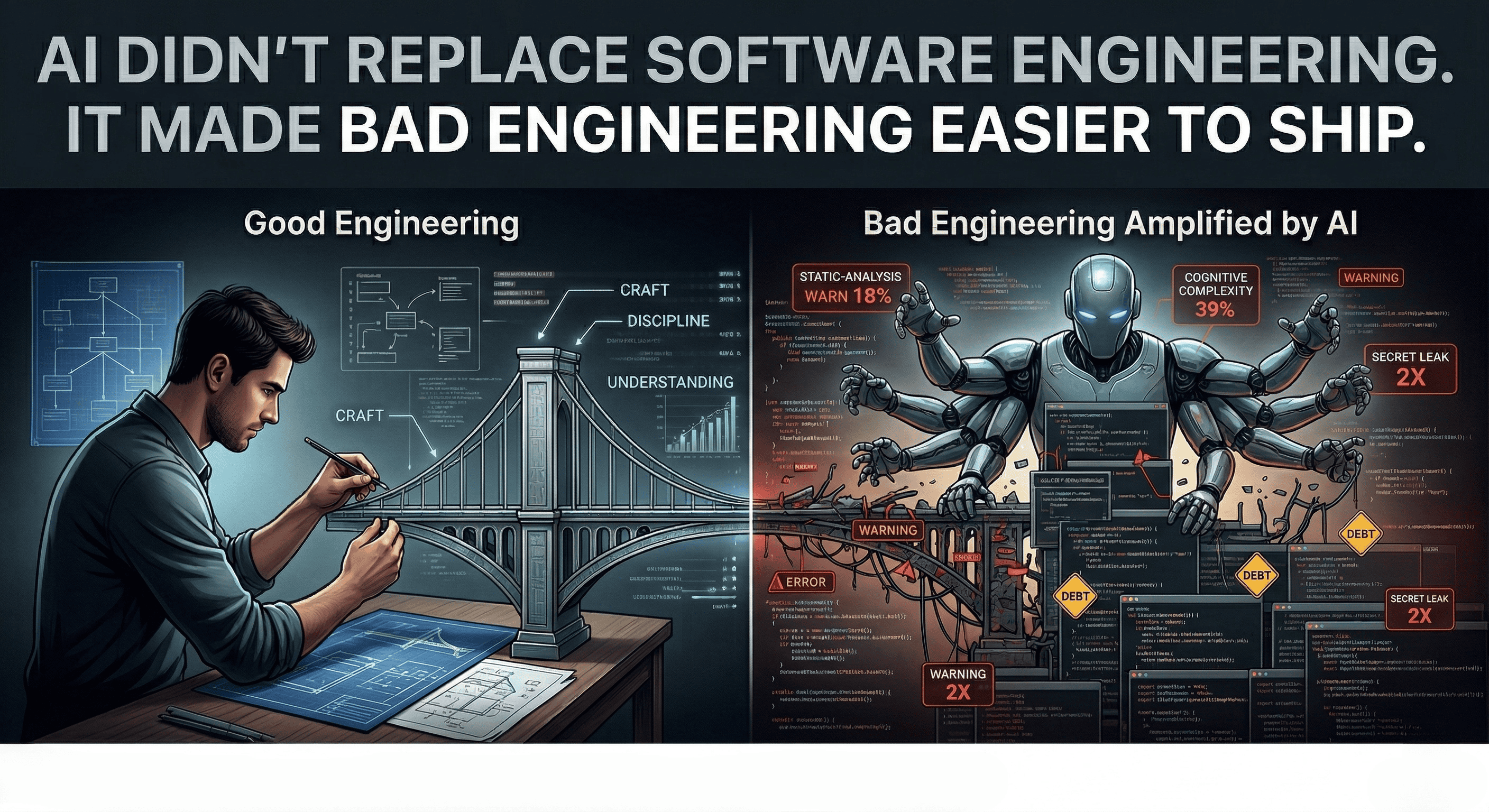

And the quality problems follow, quietly. A January 2026 study on autonomous coding agents found static-analysis warnings rising 18% and cognitive complexity increasing 39%. The researchers called it “sustained agent-induced technical debt even when velocity advantages fade.” That maps exactly to what I see: the code looks fine on the surface, the PR gets approved, the feature ships, and the complexity accumulates in places nobody is watching.

On the security side, recent GitGuardian data shows AI-assisted commits leak secrets at about 2x the baseline rate. Not because the tool is broken. Because humans under time pressure make worse decisions. Speed without discipline creates exposure.

Meanwhile, nobody connects the dots. The velocity charts are green. The sprint burndown looks healthy. But the on-call rotation gets heavier. Rollbacks creep up. The feature that shipped in two days takes two weeks to debug. The Plandek 2026 benchmarks across 2,000+ teams confirmed the pattern at scale: as coding speeds up, the bottleneck just shifts downstream to review, testing, and integration. The slow teams are still slow. They’re just slow in different places now.

And the skill problem compounds it. Anthropic ran a randomized trial where developers learning a new library with AI scored 17 percentage points lower on mastery than those who learned without it. The largest gap was in debugging. The exact skill you need most when AI-generated code breaks. If your engineers aren’t building real understanding, you’re growing people who can ship fast but can’t fix what they shipped.

The whole chain is connected. Culture rewards speed. Speed without understanding produces fragile systems. Fragile systems produce incidents. Incidents expose the gap. But by then, the culture has already moved on to the next sprint.

The perception gap

Here’s what makes this so hard to catch from the inside.

Last year, METR ran a study in which experienced developers using AI were measured to be 19% slower while believing they were 20% faster. When METR tried to rerun it with better tools, developers refused to participate if it meant working without AI. The team is now redesigning the entire experiment because measuring this honestly is harder than anyone expected.

I don’t think the specific numbers matter as much as the pattern: people feel faster. The feeling is real. But feeling and measurement aren’t the same thing. And in a culture that rewards feeling fast, nobody wants to be the person who says “slow down, let’s check.”

Thoughtworks landed on something important in their February 2026 retreat: AI is actually increasing cognitive load, not reducing it. More output, more concurrent problems, more decisions to make. Same human judgment capacity.

Stack Overflow has been tracking what they call the AI trust gap: adoption keeps climbing, trust keeps falling, and the top developer frustration is “almost-right” code that takes longer to verify and fix than it saved to generate.

Everyone knows this. Nobody wants to be the one who says it out loud, because the culture has made saying it feel like resistance.

Most AI agendas are still theater

This is the part where I’m going to annoy some people.

A lot of company “AI strategies” aren’t strategies. They’re tool rollouts with executive branding. Buy licenses. Mandate adoption. Count prompts. Celebrate throughput. Post a screenshot in the all-hands. Hope quality survives.

That’s not transformation. That’s procurement.

If your AI agenda starts with “every engineer must use Tool X” and ends before you redesign review standards, testing expectations, security boundaries, knowledge capture, and learning paths for junior engineers, then all you did was change the keyboard.

You didn’t modernize engineering. You industrialized guesswork.

And if the KPI you’re showing upstairs is “percentage of code written by AI”?

That’s one of the dumbest vanity metrics engineering has ever produced.

I don’t care how much code the model wrote. I care whether we understand what we shipped. I care whether it survives production. I care whether the team is getting better, not just faster.

Simon Willison drew the right line between vibe coding and what he now calls “agentic engineering.” If you reviewed, tested, and understood the AI-written code, that’s still software development. The production-grade version of working with AI raises the bar for tests, planning, docs, automation, QA, and review. It doesn’t lower it.

The problem is that a lot of teams adopted the speed without adopting the bar.

What actually needs to change

In earlier articles, I wrote about how the work sequence shifts and what to do about junior engineers. This piece is about the organizational layer, because that’s where the failure is concentrated right now.

Separate prototype mode from production mode. Loose AI prototyping is great for throwaway experiments. It doesn’t belong anywhere near money, customer data, security boundaries, or core workflows.

Make AI transparency normal. If a change was heavily AI-assisted, say so. Show the verification path. Reviewers should know whether they’re looking at a handcrafted change, an AI-assisted draft, or an agent-produced branch. Different creation paths deserve different scrutiny.

Review decisions, not just diffs. Ask why this approach exists. What breaks first? Which alternatives were rejected? What do we monitor? If your review culture is still optimized for nit-picking while AI is generating whole subsystems, your process is in the wrong decade.

Measure what matters. Escaped defects. Rework rate. Rollback frequency. MTTR. Time-to-understand for someone new in the codebase. A green AI usage dashboard isn’t evidence that your architecture got better.

Stop rewarding speed without understanding. This is the culture change that matters more than any tool or process. If your system promotes the engineer who ships fastest and ignores the one who catches the architectural flaw before it ships, you’re building the wrong incentives for the AI era.
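To make the “measure what matters” item concrete, here is a minimal sketch of what a quality report could look like next to the velocity chart. Everything in it is an assumption for illustration: the `Deploy` and `Incident` records, the field names, and the definition of “rework” (a change that needed a follow-up fix soon after merge) are hypothetical, and your own tracker will define them differently.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class Deploy:
    """One shipped change (hypothetical record shape)."""
    change_id: str
    rolled_back: bool = False
    reworked: bool = False  # assumed meaning: needed a follow-up fix soon after merge


@dataclass
class Incident:
    """One production incident (hypothetical record shape)."""
    opened: datetime
    resolved: datetime
    escaped: bool  # True if the defect got past review and tests into production


def rate(flags):
    """Share of True values; 0.0 for an empty input."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0


def quality_report(deploys, incidents):
    """Summarize rollback rate, rework rate, escaped defects, and MTTR in hours."""
    repair_hours = [
        (i.resolved - i.opened).total_seconds() / 3600 for i in incidents
    ]
    return {
        "rollback_rate": rate(d.rolled_back for d in deploys),
        "rework_rate": rate(d.reworked for d in deploys),
        "escaped_defects": sum(i.escaped for i in incidents),
        "mttr_hours": mean(repair_hours) if repair_hours else 0.0,
    }


# Made-up sample data, only to show the shape of the output.
deploys = [
    Deploy("a1"),
    Deploy("b2", rolled_back=True, reworked=True),
    Deploy("c3", reworked=True),
    Deploy("d4"),
]
incidents = [
    Incident(datetime(2026, 3, 1, 2, 0), datetime(2026, 3, 1, 5, 0), escaped=True),
    Incident(datetime(2026, 3, 9, 14, 0), datetime(2026, 3, 9, 15, 0), escaped=False),
]

report = quality_report(deploys, incidents)
print(report)
```

The point isn’t these exact formulas. It’s that none of these numbers move when someone generates more code, which is why they belong on the same dashboard as throughput.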

But honestly? The most important thing you can do isn’t on this list.

Sit down with your team and have an honest conversation about what you actually understand versus what you shipped. Not a retro. Not a metrics review. A real conversation. What did we ship this month that we could confidently debug at 2 AM without the AI? What would break if the model hallucinated something subtle? Where are we trusting output we haven’t verified?

If that conversation is uncomfortable, good. That’s the conversation that needed to happen three months ago.

The craft didn’t change

AI changed the toolkit. It changed the speed of first drafts. It changed the sequence of when work happens. It changed how much mechanical effort one good engineer can burn through in a day.

But it didn’t change the craft.

We’re still in the business of turning ambiguity into reliable systems. Still responsible for the trade-offs. Still accountable when the thing breaks. Still need people who understand architecture, testing, operations, failure modes, and human consequences.

The teams that win won’t be the ones that generate the most code. They’ll be the ones that still know what good engineering looks like when the machine gets loud. The ones where discipline isn’t a career risk. The ones where slowing down to think is treated as engineering, not obstruction.

The tool changed. The accountability didn’t.

What’s the worst AI-caused quality problem you’ve seen? Not a hypothetical. A real one. I’d genuinely like to hear it. Find me on X or Telegram.

Disclaimer: This article references specific companies, products, research studies, and industry analyses for illustrative and educational purposes. Information is based on publicly available sources including METR, Plandek, Anthropic, GitClear, GitGuardian, Stack Overflow, and Thoughtworks reporting, available at the time of writing. I have not independently verified all claims. The analysis and opinions expressed are my own. I have no financial interest, business relationship, or affiliation with any companies mentioned. This is commentary, not investment, legal, or business advice.