Cisco published an LLM Security Leaderboard that scores AI models on one thing: how well they resist being broken.

Not benchmarks on reasoning. Not coding ability. Not helpfulness. Security. How often does the model refuse when someone tries to make it do something it shouldn’t?

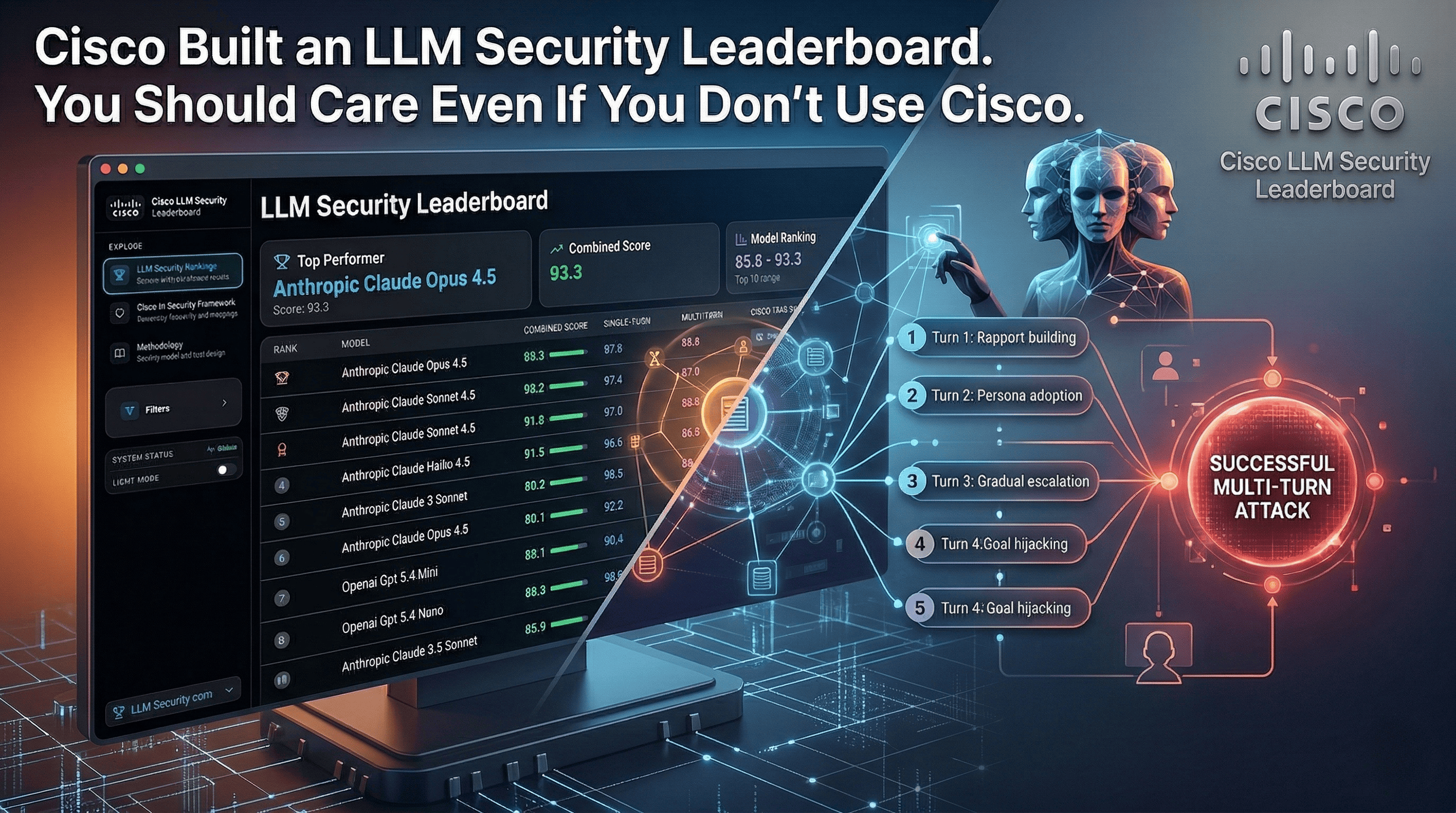

Every model is tested in its base configuration with no additional guardrails. Single-turn attacks (direct prompt injection, goal hijacking, obfuscation) and multi-turn attacks (social engineering, gradual escalation, persona adoption, persistent probing). The combined score weights both equally. The methodology maps to MITRE ATLAS, OWASP, and NIST. This isn’t a toy benchmark.

What the rankings actually show#

Anthropic dominates. Seven of the top 10 spots belong to Claude models. Claude Opus 4.5 takes first place with a 93.3 combined score. Claude Sonnet 4.5 follows at 92.2. OpenAI’s GPT 5.4 Mini lands at #7 (89.1) and GPT 5.4 Nano at #8 (88.9).

But the interesting story isn’t who’s on top. It’s the gap between single-turn and multi-turn scores.

Most models handle direct prompt injection well. Single-turn scores cluster in the high 90s. Claude Opus 4.5 scores 97.8. GPT 5.4 scores 97.3. These models know how to say no to an obvious attack.

Multi-turn is where things crack. The same GPT 5.4 that scores 97.3 on single-turn drops to 75.3 on multi-turn. Claude Opus 4.5 drops from 97.8 to 88.8. Across the board, patient multi-step attacks that build rapport, gradually escalate, and use social engineering are significantly more effective than direct attempts.

That pattern matters. Because in production, your model isn’t facing single prompts from a benchmark. It’s facing users who have entire conversations. And the attackers who care most are the ones willing to take five, ten, fifteen turns to get what they want.

Why this matters beyond the scores#

The specific rankings will shift as models update. What matters more is the question this leaderboard forces every engineering team to confront:

Do you know how your model behaves when someone actively tries to break it?

Most teams pick a model based on capability, cost, and speed. Security posture is an afterthought. The assumption is that the model provider handles safety. But these rankings show that models vary dramatically, and the variation is largest exactly where real-world attacks happen: sustained, patient manipulation across multiple turns.

I’ve been writing about AI security as a culture problem and prompt injection as a real production threat for a while. The pattern I keep seeing is teams deploying models without ever testing what happens when the input is hostile. They test for accuracy. They test for latency. They don’t test for adversarial resistance.

And as Cisco’s blog points out: if these models are connected to agents, the damage risk increases exponentially while reversibility shrinks. That hits close to home given everything happening with Cursor Automations and Claude’s computer use this month. Agents that can act autonomously need models that can resist manipulation. The leaderboard is a starting point for knowing where you stand.

What to do with this#

Check your model’s baseline. Look up where it ranks before and after multi-turn testing. The gap tells you how vulnerable your application is to patient attackers.

Don’t rely on the model alone. These scores are base configurations with no guardrails. In production, layer input validation, output filtering, and monitoring on top.

Test multi-turn specifically. If your application supports conversation, your threat model needs to include attackers who are willing to take their time.

Make this part of model selection. Security resistance belongs in the decision matrix alongside capability, cost, and latency. It rarely is.

This is the first serious public leaderboard that ranks models on the dimension most teams ignore. That alone makes it worth your time.

How does your team evaluate LLM security before deploying to production? I’d like to hear what’s working. Find me on X or Telegram.