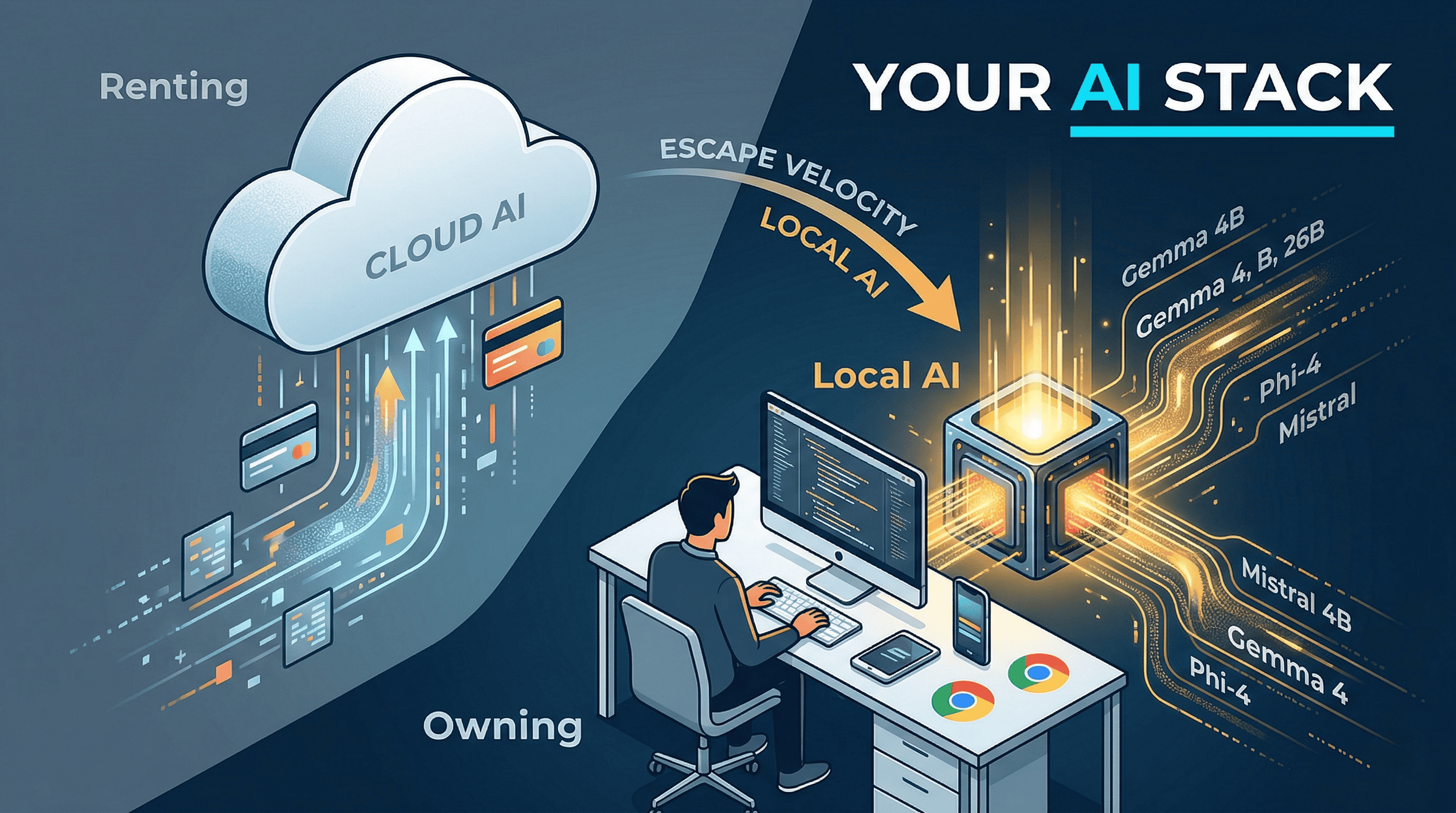

When Anthropic tells paying Claude Code subscribers that OpenClaw and other third-party harnesses need separate pay-as-you-go billing starting April 4, that’s not just a pricing update. That’s platform risk made visible. If your workflow depends on someone else’s limits, economics, and tolerance for power users, your stack is rented.

At almost the same moment, Google dropped Gemma 4 under Apache 2.0 across phone-to-workstation tiers. Over 400 million downloads of the Gemma family so far. This isn’t a niche hobbyist corner anymore.

One story is about dependence. The other is about escape velocity.

Local finally crossed the line#

For a long time, “run it locally” meant weaker models, ugly tooling, and a lot of compromises. You got privacy but gave up capability.

That’s changing fast. The model layer is better. The runtime layer is better. And the quality-to-hardware ratio finally crossed from “cute demo” to “actually useful.”

The mistake people make is treating local LLMs as a single category. They’re not. There are now three very different tiers:

Phone and tablet. Gemma 4’s smallest models (E2B at ~3.2GB, E4B at ~5GB) run on mobile through Google’s AI Edge Gallery. Microsoft’s Phi-4-mini targets mobile CPUs with ONNX builds. Hugging Face’s SmolLM2 is built for on-device from the start. Not your frontier coding copilot. But credible for summarization, drafting, classification, and offline assistance.

Laptop. The 4B to 8B class is the sweet spot. Qwen3-4B with switchable thinking modes, Phi-4-mini for compact reasoning, Ministral 8B for edge setups. Real assistants on normal hardware.

Workstation and higher-memory Macs. This is where local stops being a privacy story and becomes a control story. Mistral Small 3.1 runs on a single RTX 4090 or a 32GB Mac. Gemma 4’s 26B and 31B models are realistic for workstation setups. Qwen3-30B-A3B has 30.5B total parameters but only 3.3B activated per token, which is exactly the kind of design that makes local deployment attractive.

And the tooling caught up. Gemma 4 is already in Ollama. LM Studio keeps pushing the “download and run” workflow. Microsoft has ONNX Runtime and Foundry Local for Phi. The gap between “model exists” and “normal person can run it” is closing fast.

What local doesn’t do#

Local isn’t magic and I don’t want to romanticize it.

You still give up raw frontier capability. You give up some convenience. You give up the giant context windows and web-connected workflows that cloud models handle more naturally. On mobile, you fight battery and heat. A phone can run a model. That doesn’t mean you want it thinking for three minutes over a giant prompt while your battery melts.

The local story is strongest around focused workloads: summarization, extraction, drafting, classification, translation, private notes, offline copilots, and first-pass coding help.

So no, local doesn’t mean “replace Claude, ChatGPT, and Gemini everywhere.” That’s the wrong goal.

The right goal is to stop letting every useful AI workflow become a monthly lease tied to someone else’s pricing model, product roadmap, and policy mood.

Why the Anthropic move matters more than people think#

Everyone repeats the privacy argument for local models. Fair enough.

The stronger argument is operational.

If a vendor can wake up on Friday and tell you that a workflow you built around is no longer covered by the subscription you’re already paying for, then “works today” isn’t the same thing as “belongs in your stack.”

Anthropic’s move may be rational. If third-party harnesses blow past the economics of a flat subscription, of course they’ll tighten the terms. That’s what platforms do. I wrote about this pattern when I was looking at AI wrappers, and again when I argued the SaaS bargain is breaking. Platform providers always move up the stack eventually.

Local gives you a floor the platform can’t take away.

That floor doesn’t need to be frontier-grade to be strategically valuable.

It just needs to be yours.

What I’d actually run today#

If I wanted a phone-first local assistant: Gemma 4 E2B/E4B first, then Phi-4-mini for reasoning-heavy tasks.

If I wanted a good local model on a normal laptop: Qwen3-4B, Phi-4-mini, or Ministral 8B.

If I had a 32GB Mac or stronger desktop: Mistral Small 3.1 and Gemma 4 26B.

If I had a 24GB GPU and wanted the best local jump in capability: Gemma 4 31B and Qwen3-30B-A3B.

That’s not a benchmark answer. It’s a deployment answer.

For two years, local LLMs mostly meant compromise. In 2026, they increasingly mean options. The frontier cloud models are still stronger. But that’s no longer the only question that matters.

The real question is: which parts of your AI stack are you still comfortable renting?

Running local models? I’d love to hear what you’re using and where. Find me on X or Telegram.