“I only built a small local script for myself.”

That sentence, from a well-intentioned engineer who just wanted to automate something tedious, might be the most dangerous thing happening inside your organization right now.

Not because the engineer is malicious. Because AI changed what one person can do in an afternoon. And the organization’s controls weren’t built for that.

The old version of this problem#

Shadow IT has been around forever. Someone signs up for a SaaS tool with their personal email. A team spins up an AWS instance outside the approved account. A developer installs an unsanctioned browser extension. IT security has been playing whack-a-mole with this for decades.

But the old version had natural friction. Building useful software took time. One person couldn’t do that much damage alone because one person couldn’t build that much alone.

AI removed that friction.

What shadow AI actually looks like#

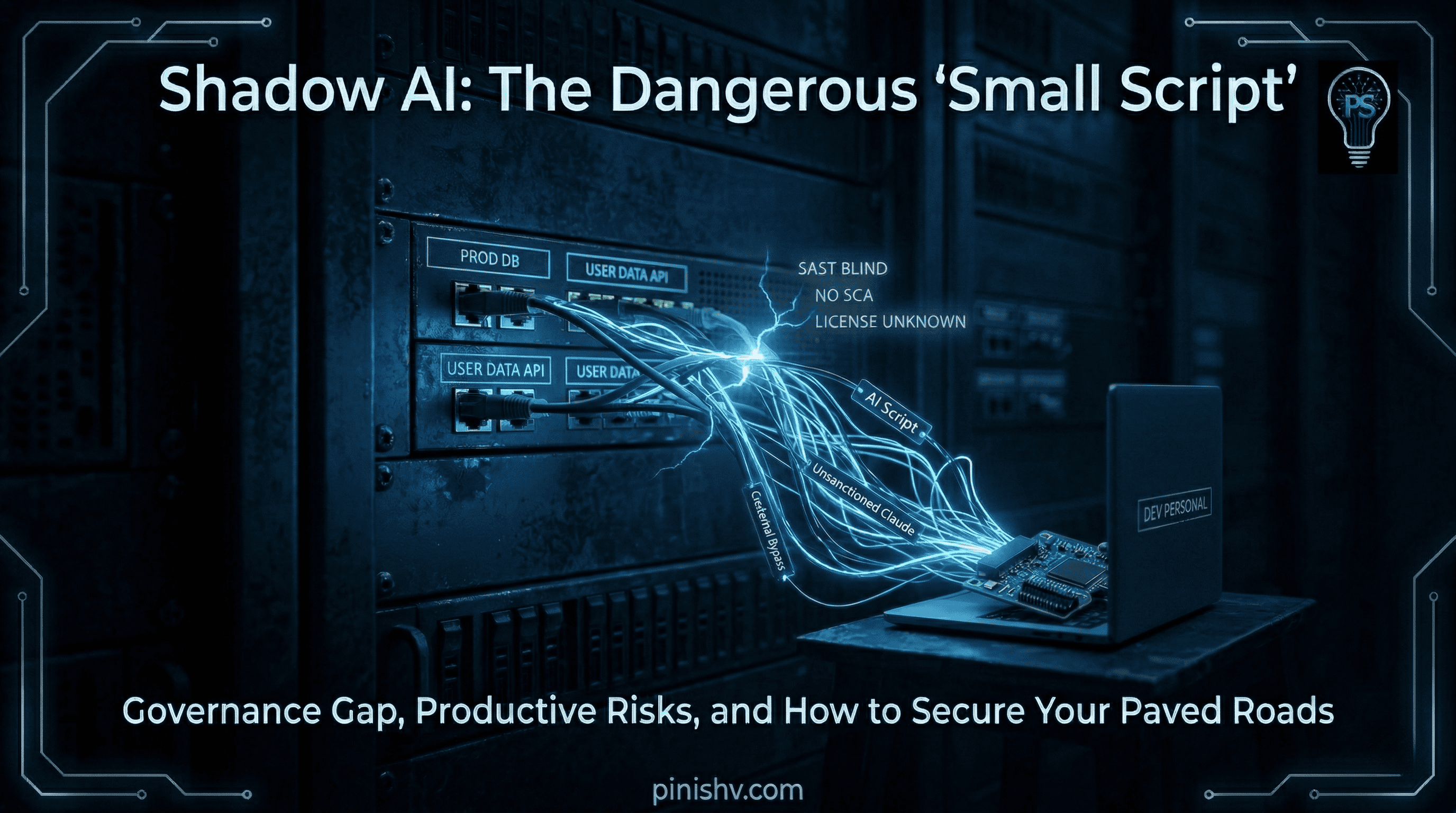

An engineer uses their personal Claude or ChatGPT account to build an internal tool. They don’t think of it as shadow AI. They think of it as being productive. The tool works. It saves the team time. Everyone’s happy.

But that tool may touch production credentials. It may pull in five packages nobody approved. It may embed an API key. It may process customer data. It may send data to an AI provider through a personal account with consumer-grade privacy terms. It never goes through SAST, SCA, secret scanning, license review, or architecture review.

Sonar’s developer survey says 35% of developers access AI coding tools through personal accounts rather than work-sanctioned ones. GitHub’s code scanning analyzes code in a repository. If the code never makes it to a repository, those controls are blind.

One person. One afternoon. Zero oversight. And because AI made them productive enough to actually ship something useful, nobody questions it until something breaks.

Why this is different from old shadow IT#

The old shadow IT problem was someone using Dropbox instead of SharePoint. Annoying, but contained.

Shadow AI is someone building a tool that connects to production databases, processes customer records, calls external APIs, and runs on a schedule. In a day. Without anyone knowing.

The blast radius is completely different. And the speed means it happens before governance can react.

I wrote about the Claude Code leak this week. That was a packaging mistake at Anthropic. But the shadow AI version of that story plays out in organizations every day. Not as a public incident. As a quiet accumulation of unmanaged code touching systems the company is responsible for.

What to actually do about it#

Sanction the tools, not just the behavior. Give teams approved AI accounts with enterprise privacy terms. If they’re going to use AI regardless (and they will), make the sanctioned path easier than the personal one.

Make the paved road the fastest road. If using the official repo, the official CI pipeline, and the official review process is slower than doing it solo with a personal AI account, people will keep going solo. Fix the incentive.

Scan for what you don’t know about. Look for patterns: API keys in places they shouldn’t be, services calling external endpoints you didn’t approve, code repos that appeared outside your org’s GitHub or GitLab. The stuff you don’t know about is the stuff that hurts.

Talk about it openly. The problem isn’t that employees want to be productive. The problem is unmanaged productivity touching systems the organization is responsible for. Frame it that way. Not as a crackdown. As a boundary.

The real issue#

Nobody is building shadow AI to cause problems. They’re building it because AI made them capable of solving problems nobody else was solving for them. That’s a sign of a motivated team. It’s also a sign that your official tooling and processes aren’t keeping up.

The fix isn’t to ban AI. It’s to make the managed path so good that nobody needs to go around it.

Dealing with shadow AI in your organization? I’d love to hear how you’re handling it. Find me on X or Telegram.